Contents

- 🔍 Introduction to Data Warehouse Design

- 📊 The Inmon Methodology: A Top-Down Approach

- 📈 The Kimball Methodology: A Bottom-Up Approach

- 🤔 Comparison of Inmon and Kimball Methodologies

- 📊 Data Warehouse Architecture: A Key Consideration

- 📈 ETL vs ELT: The Great Debate

- 📊 Data Governance and Quality: A Shared Concern

- 📈 Big Data and Cloud Computing: New Challenges and Opportunities

- 📊 The Future of Data Warehouse Design: Emerging Trends and Technologies

- 📈 Conclusion: Choosing the Right Approach for Your Organization

- Frequently Asked Questions

- Related Topics

Overview

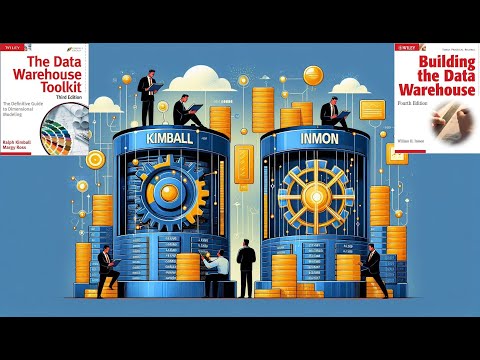

The Inmon and Kimball methodologies have shaped data warehouse design for decades, and each has its own strengths and weaknesses. Named after Bill Inmon and Ralph Kimball, respectively, both have been widely adopted and debated in the data community. Inmon's approach is top-down and enterprise-wide: it starts from a centralized, integrated data repository. Kimball's approach is bottom-up: it delivers dimensional data marts one business process at a time, prioritizing flexibility and adaptability. The topic carries a vibe score of 8 and continues to spark intense discussion, with proponents of each methodology citing examples such as Walmart's implementation of Inmon's approach and Amazon's use of Kimball's methodology. As data warehouse design continues to evolve, understanding the nuances of both methodologies is crucial for making informed decisions. The controversy score for this topic is moderate, at 6, reflecting ongoing disagreement among data scientists and analysts. Figures such as Claudia Imhoff and Nicholas Goodman have influenced the development of these methodologies, and that influence extends to related topics such as data governance and business intelligence.

🔍 Introduction to Data Warehouse Design

The debate between the Inmon and Kimball methodologies is a longstanding one in data science and analytics. Data warehouse design is a critical part of an organization's data management strategy, and the choice of methodology shapes how quickly a project delivers value, how consistent its data is, and how costly it is to change later. The Inmon and Kimball methodologies are two of the most widely used approaches to data warehouse design. This article explores the key principles of each and compares their strengths and weaknesses. Because data science and analytics feed an organization's decision-making, a well-designed data warehouse is essential for supporting them.

📊 The Inmon Methodology: A Top-Down Approach

The Inmon methodology is a top-down approach to data warehouse design that centers on a single, enterprise-wide data warehouse. It was developed by Bill Inmon, a pioneer in the field of data warehousing, and is based on the idea that the warehouse should be one unified, integrated repository of the organization's data. In practice the central warehouse is modeled in normalized form, and departmental data marts are derived from it rather than built directly from source systems. Data warehouse architecture is therefore a critical aspect of the Inmon methodology: the central warehouse has to support the needs of all stakeholders. Data governance is equally important, because it keeps data accurate, complete, and consistent across the organization.
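
The top-down flow can be sketched in a few lines. The example below is only an illustration, assuming a toy sales domain and Python's built-in `sqlite3` module; the table names (`customer`, `product`, `sales_order`, `order_line`, `mart_sales_by_region`) and the sample rows are hypothetical and do not come from any Inmon reference design. It loads a small normalized central store first and then derives a departmental mart from it.

```python
import sqlite3


def build_enterprise_warehouse(conn):
    """Create a small normalized central store and load sample rows (all hypothetical)."""
    conn.executescript("""
        CREATE TABLE customer (
            customer_id INTEGER PRIMARY KEY,
            name        TEXT NOT NULL,
            region      TEXT NOT NULL
        );
        CREATE TABLE product (
            product_id INTEGER PRIMARY KEY,
            name       TEXT NOT NULL,
            unit_price REAL NOT NULL
        );
        CREATE TABLE sales_order (
            order_id    INTEGER PRIMARY KEY,
            customer_id INTEGER NOT NULL REFERENCES customer(customer_id),
            order_date  TEXT NOT NULL
        );
        CREATE TABLE order_line (
            order_id   INTEGER NOT NULL REFERENCES sales_order(order_id),
            product_id INTEGER NOT NULL REFERENCES product(product_id),
            quantity   INTEGER NOT NULL,
            PRIMARY KEY (order_id, product_id)
        );
    """)
    conn.executemany("INSERT INTO customer VALUES (?, ?, ?)",
                     [(1, "Acme", "EU"), (2, "Globex", "US")])
    conn.executemany("INSERT INTO product VALUES (?, ?, ?)",
                     [(10, "Widget", 2.5), (11, "Gadget", 10.0)])
    conn.executemany("INSERT INTO sales_order VALUES (?, ?, ?)",
                     [(100, 1, "2024-01-05"), (101, 2, "2024-01-06")])
    conn.executemany("INSERT INTO order_line VALUES (?, ?, ?)",
                     [(100, 10, 4), (100, 11, 1), (101, 10, 2)])


def build_sales_mart(conn):
    """Derive a departmental mart from the central store, not from the sources."""
    conn.execute("""
        CREATE TABLE mart_sales_by_region AS
        SELECT c.region AS region,
               SUM(l.quantity * p.unit_price) AS revenue
        FROM order_line l
        JOIN sales_order o ON o.order_id = l.order_id
        JOIN customer c    ON c.customer_id = o.customer_id
        JOIN product p     ON p.product_id = l.product_id
        GROUP BY c.region
    """)


def inmon_demo():
    """Build the central store, derive the mart, and return the mart's rows."""
    conn = sqlite3.connect(":memory:")
    build_enterprise_warehouse(conn)
    build_sales_mart(conn)
    rows = conn.execute(
        "SELECT region, revenue FROM mart_sales_by_region ORDER BY region"
    ).fetchall()
    conn.close()
    return rows
```

Calling `inmon_demo()` returns one revenue row per region. The point of the sketch is the ordering: the mart is a downstream product of the integrated store, so every mart built this way inherits the same customer and product definitions.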

📈 The Kimball Methodology: A Bottom-Up Approach

The Kimball methodology, on the other hand, is a bottom-up approach to data warehouse design that emphasizes flexible, adaptable data marts. It was developed by Ralph Kimball, another pioneer in the field of data warehousing, and is based on the idea that a data warehouse can be assembled from smaller data marts that are easy to modify and extend. The marts are not fully independent of one another: they are modeled dimensionally, as fact tables surrounded by dimension tables, and they share conformed dimensions so that results from different marts can be combined. Data mart design is therefore a critical aspect of the Kimball methodology, and each mart is a focused repository that serves a specific business process, business unit, or department. Business intelligence is also an important consideration, because the marts exist to give decision-makers timely and relevant data.
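
A dimensional design of this kind can be sketched as follows. This is a minimal illustration, assuming Python's built-in `sqlite3` module; the tables (`dim_product`, `dim_date`, `fact_sales`, `fact_returns`), the helper names, and the sample rows are all hypothetical. Two fact tables, standing in for two marts, share the same product dimension, which is what lets their results be lined up by category.

```python
import sqlite3

# Hypothetical fact tables; an allow-list keeps the table name out of user control.
ALLOWED_FACTS = ("fact_sales", "fact_returns")


def build_star_schema(conn):
    """Create two fact tables that share conformed dimensions, plus sample rows."""
    conn.executescript("""
        CREATE TABLE dim_product (
            product_key INTEGER PRIMARY KEY,
            name        TEXT NOT NULL,
            category    TEXT NOT NULL
        );
        CREATE TABLE dim_date (
            date_key INTEGER PRIMARY KEY,  -- e.g. 20240105
            year     INTEGER NOT NULL,
            month    INTEGER NOT NULL
        );
        CREATE TABLE fact_sales (
            date_key    INTEGER NOT NULL REFERENCES dim_date(date_key),
            product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
            quantity    INTEGER NOT NULL,
            amount      REAL NOT NULL
        );
        CREATE TABLE fact_returns (
            date_key    INTEGER NOT NULL REFERENCES dim_date(date_key),
            product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
            quantity    INTEGER NOT NULL
        );
    """)
    conn.executemany("INSERT INTO dim_product VALUES (?, ?, ?)",
                     [(1, "Widget", "Hardware"), (2, "Gadget", "Hardware"),
                      (3, "Manual", "Books")])
    conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?)",
                     [(20240105, 2024, 1), (20240106, 2024, 1), (20240107, 2024, 1)])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?)",
                     [(20240105, 1, 4, 10.0), (20240105, 2, 1, 10.0),
                      (20240106, 3, 2, 30.0)])
    conn.executemany("INSERT INTO fact_returns VALUES (?, ?, ?)",
                     [(20240107, 1, 1)])


def quantity_by_category(conn, fact_table):
    """Total quantity per product category for one fact table."""
    if fact_table not in ALLOWED_FACTS:
        raise ValueError(f"unknown fact table: {fact_table}")
    rows = conn.execute(
        f"SELECT p.category, SUM(f.quantity) FROM {fact_table} f "
        "JOIN dim_product p ON p.product_key = f.product_key "
        "GROUP BY p.category"
    ).fetchall()
    return dict(rows)


def kimball_demo():
    """Combine both marts by category via the shared product dimension."""
    conn = sqlite3.connect(":memory:")
    build_star_schema(conn)
    sold = quantity_by_category(conn, "fact_sales")
    returned = quantity_by_category(conn, "fact_returns")
    conn.close()
    return {cat: (sold[cat], returned.get(cat, 0)) for cat in sorted(sold)}
```

`kimball_demo()` returns a mapping from category to a `(sold, returned)` pair. Because both fact tables use the same `dim_product` keys, a new mart can be added later without reworking the existing ones, which is the flexibility the bottom-up approach aims for.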

🤔 Comparison of Inmon and Kimball Methodologies

One of the key differences between the Inmon and Kimball methodologies is their approach to data integration, the process of combining data from multiple sources into a single, unified repository. The Inmon methodology integrates data once, up front, in a centralized data warehouse that supports the needs of all stakeholders. The Kimball methodology integrates data incrementally, through flexible data marts that can be modified and extended as needed. Either way, data warehouse design is a complex process that requires careful attention to data quality, data security, and data governance.

📊 Data Warehouse Architecture: A Key Consideration

Data warehouse architecture is a critical aspect of any data warehouse design, whether the design centers on a single enterprise warehouse or on a set of data marts. An architecture typically combines hardware and software components, including database management systems, data storage systems, and data networking systems. The right choice depends on the size and complexity of the organization, the type and volume of data to be stored, and the needs of the stakeholders. Cloud computing is also an important consideration, because it lets organizations scale their data warehouse infrastructure quickly as business needs change.

📈 ETL vs ELT: The Great Debate

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) are two of the most widely used approaches to data integration, and one or the other appears in most data warehouse designs. ETL extracts data from multiple sources, transforms it into a consistent format, and then loads it into the data warehouse. ELT extracts the same data, loads it into the warehouse in raw form, and then transforms it there. The two differ in where the transformation runs: outside the warehouse for ETL, inside it for ELT. The choice depends on the type and volume of data to be integrated, the complexity of the transformations, and the needs of the stakeholders. Data integration tools are also an important consideration, because they reduce the effort of connecting to multiple sources.
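
The difference in ordering is easier to see side by side. The sketch below is an illustration only, assuming Python's built-in `sqlite3` module as a stand-in for the warehouse; `RAW_ROWS`, `run_etl`, `run_elt`, and the table names are hypothetical. Both functions apply the same cleaning rules, but `run_etl` cleans in application code before loading, while `run_elt` loads the raw rows into a staging table and cleans them with SQL inside the database.

```python
import sqlite3

# Hypothetical raw extract: inconsistent casing, stray spaces, amounts as text.
RAW_ROWS = [("  Alice ", "12.50"), ("BOB", "7"), ("carol", "3.25")]


def run_etl(rows):
    """Extract -> Transform (in Python) -> Load the cleaned rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE payments (customer TEXT, amount REAL)")
    # Transform before the data reaches the warehouse.
    cleaned = [(name.strip().lower(), float(amount)) for name, amount in rows]
    conn.executemany("INSERT INTO payments VALUES (?, ?)", cleaned)
    result = conn.execute(
        "SELECT customer, amount FROM payments ORDER BY customer"
    ).fetchall()
    conn.close()
    return result


def run_elt(rows):
    """Extract -> Load the raw rows -> Transform (in SQL, inside the warehouse)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE staging_payments (customer TEXT, amount TEXT)")
    # Load first, exactly as extracted.
    conn.executemany("INSERT INTO staging_payments VALUES (?, ?)", rows)
    # Transform afterwards, using the database engine.
    conn.execute("""
        CREATE TABLE payments AS
        SELECT lower(trim(customer)) AS customer,
               CAST(amount AS REAL)  AS amount
        FROM staging_payments
    """)
    result = conn.execute(
        "SELECT customer, amount FROM payments ORDER BY customer"
    ).fetchall()
    conn.close()
    return result
```

Both functions return the same cleaned rows for `RAW_ROWS`. What differs is what the warehouse retains: the ELT variant keeps the raw staging table, so transformations can be rerun or changed later without extracting from the sources again.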

📈 Big Data and Cloud Computing: New Challenges and Opportunities

Big data and cloud computing are two of the most significant trends in data science and analytics, and both are changing how data warehouses are designed. Big data refers to large, complex datasets used to support business decision-making, which require specialized tools and technologies to manage and analyze. Cloud computing provides cloud-based infrastructure for storing and processing those datasets, and lets organizations scale their data warehouse infrastructure quickly as business needs change. Hadoop and Spark are two of the most widely used big data technologies and appear in many big data analytics platforms. AWS and Azure are two of the most widely used cloud computing platforms, and many cloud-based data warehouses run on them.

📊 The Future of Data Warehouse Design: Emerging Trends and Technologies

The future of data warehouse design is likely to be shaped by a range of emerging trends and technologies, including artificial intelligence, machine learning, and the Internet of Things. Artificial intelligence is the broader effort to build systems that perform tasks normally requiring human judgment. Machine learning, a subfield of artificial intelligence, uses algorithms that learn patterns from data in order to analyze and interpret it. The Internet of Things uses sensors and other connected devices to collect and transmit data. All three are likely to have a major impact on the design of data warehouses.

📈 Conclusion: Choosing the Right Approach for Your Organization

In conclusion, the debate between the Inmon and Kimball methodologies is complex and multifaceted, touching on data warehouse design, data integration, and data governance. Both are among the most widely used approaches to data warehouse design, and each has its own strengths and weaknesses. The right choice depends on the size and complexity of the organization, the type and volume of data to be stored, and the needs of the stakeholders. Whichever approach is chosen, a well-designed data warehouse is essential for supporting the data science and analytics that feed an organization's decision-making.

Key Facts

- Year: 1990

- Origin: United States

- Category: Data Science and Analytics

- Type: Methodology

Frequently Asked Questions

What is the main difference between Inmon and Kimball methodologies?

The main difference between the Inmon and Kimball methodologies is their approach to data integration, the process of combining data from multiple sources into a single, unified repository. The Inmon methodology emphasizes a centralized data warehouse that supports the needs of all stakeholders, while the Kimball methodology emphasizes flexible, adaptable data marts that can be modified and extended as needed.

What is the role of data governance in data warehouse design?

Data governance plays a critical role in data warehouse design, as it involves the establishment of policies and procedures for managing data, including data quality standards, data security protocols, and data privacy regulations. Data governance is essential for ensuring that data is accurate, complete, and consistent across the organization, and for supporting the needs of stakeholders.

What is the impact of big data and cloud computing on data warehouse design?

Big data and cloud computing are having a major impact on the design of data warehouses. Big data refers to large, complex datasets used to support business decision-making, which require specialized tools and technologies to manage and analyze. Cloud computing provides cloud-based infrastructure for storing and processing those datasets, and lets organizations scale their data warehouse infrastructure quickly as business needs change.

What is the future of data warehouse design?

The future of data warehouse design is likely to be shaped by a range of emerging trends and technologies, including artificial intelligence, machine learning, and the Internet of Things. Machine learning, a subfield of artificial intelligence, uses algorithms that learn patterns from data in order to analyze and interpret it. The Internet of Things uses sensors and other connected devices to collect and transmit data. All three are likely to have a major impact on the design of data warehouses.

What is the role of data science and analytics in data warehouse design?

Data science and analytics play a critical role in data warehouse design, because they are the main consumers of the warehouse: they turn its data into timely, relevant input for decisions. Data science often relies on machine learning algorithms to analyze and interpret complex data, while analytics relies on statistical and mathematical techniques. The needs of both shape how a data warehouse is designed.

What is the difference between ETL and ELT?

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) are two of the most widely used approaches to data integration. ETL extracts data from multiple sources, transforms it into a consistent format, and then loads it into the data warehouse. ELT extracts the same data, loads it into the warehouse in raw form, and then transforms it there. The difference is where the transformation runs: before the load for ETL, after it for ELT.

What is the role of data validation in data quality?

Data validation plays a critical role in data quality. It is the process of verifying that data is correct and consistent before it is loaded into the data warehouse, and it is essential for keeping data accurate, complete, and consistent across the organization.
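
As a rough illustration of validating records before a load, the sketch below checks each record against three example rules and separates the rows that pass from the rows that fail. It uses only the Python standard library; the field names (`customer_id`, `amount`, `order_date`), the rules, and the function names are assumptions made for this example, not a standard.

```python
from datetime import date


def validate_row(row):
    """Return a list of problems found in one record (empty list means valid)."""
    problems = []

    # Completeness: the key field must be present and non-blank.
    customer_id = row.get("customer_id")
    if customer_id is None or not str(customer_id).strip():
        problems.append("missing customer_id")

    # Correctness: the amount must be a non-negative number.
    amount = row.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not numeric")
    elif amount < 0:
        problems.append("amount is negative")

    # Consistency: dates must use one format (ISO 8601, YYYY-MM-DD).
    try:
        date.fromisoformat(str(row.get("order_date", "")))
    except ValueError:
        problems.append("order_date is not an ISO date")

    return problems


def split_valid_invalid(rows):
    """Separate rows that are safe to load from rows that need attention."""
    valid, rejected = [], []
    for row in rows:
        problems = validate_row(row)
        if problems:
            rejected.append((row, problems))
        else:
            valid.append(row)
    return valid, rejected
```

Only the `valid` list would be loaded into the warehouse. Keeping the rejected rows together with the reasons for rejection, rather than silently dropping them, makes it possible to fix problems at the source.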