Contents

- 🔍 Introduction to Algorithmic Showdown

- 📊 Mathematics of Algorithms: A Foundational Approach

- 🤔 Computational Complexity Theory: The Study of Resource Bounds

- 📈 Time and Space Complexity: Understanding the Trade-offs

- 👊 Theoretical Models: Turing Machines and Beyond

- 📊 Algorithmic Techniques: Divide and Conquer, Dynamic Programming, and Greedy Algorithms

- 🔍 Analysis of Algorithms: Big O Notation and Its Limitations

- 🌐 Real-World Applications: Cryptography, Compilers, and Database Systems

- 🤝 The Interplay between Mathematics and Computational Complexity

- 🚀 Future Directions: Quantum Computing and the Impact on Algorithm Design

- 📚 Conclusion: The Ongoing Quest for Efficient Algorithms

- Frequently Asked Questions

- Related Topics

Overview

The mathematics of algorithms and computational complexity theory are two distinct yet interconnected fields that underpin computer science. The mathematics of algorithms focuses on the design, analysis, and optimization of algorithms, with pioneers like Donald Knuth and Robert Tarjan making significant contributions. Computational complexity theory studies the resources, such as time and memory, required to solve computational problems, with notable figures like Stephen Cook and Richard Karp shaping the field. While the mathematics of algorithms provides the tools for efficient computation, computational complexity theory offers a framework for understanding the inherent limitations of computation. The interplay between these two disciplines has far-reaching implications and carries a vibe score of 8 out of 10, reflecting the intense interest and debate surrounding their applications. A key area of tension is the gap between the efficiency of the best known algorithms and the inherent difficulty of the problems they solve, with researchers like Oded Goldreich and Shafi Goldwasser pushing the boundaries of what is possible. As the field continues to evolve, we can expect to see significant advancements in areas like cryptography and optimization, with potential breakthroughs in quantum computing and artificial intelligence.

🔍 Introduction to Algorithmic Showdown

The study of algorithms has become a cornerstone of computer science, with two primary approaches: the mathematics of algorithms and computational complexity theory. The mathematics of algorithms focuses on the design and analysis of algorithms using mathematical techniques, such as Algebra and Combinatorics. On the other hand, computational complexity theory examines the resources required to solve computational problems, including time and space complexity. This field has led to significant advancements in our understanding of Cryptography and Compiler Design. As we delve into the world of algorithms, it becomes clear that the interplay between these two approaches is crucial for developing efficient solutions to complex problems. The work of Donald Knuth has been instrumental in shaping our understanding of algorithm design and analysis.

📊 Mathematics of Algorithms: A Foundational Approach

The mathematics of algorithms provides a foundational approach to understanding the behavior of algorithms. By applying mathematical techniques, such as Graph Theory and Number Theory, we can analyze the performance of algorithms and identify potential bottlenecks. This approach has led to the development of efficient algorithms for solving problems in Computer Networks and Database Systems. Furthermore, the study of Mathematical Optimization has enabled the creation of algorithms that can optimize complex systems. However, the mathematics of algorithms is not without its limitations, and the study of Computational Complexity Theory provides a necessary complement to this approach. The work of Stephen Cook has been influential in the development of computational complexity theory.

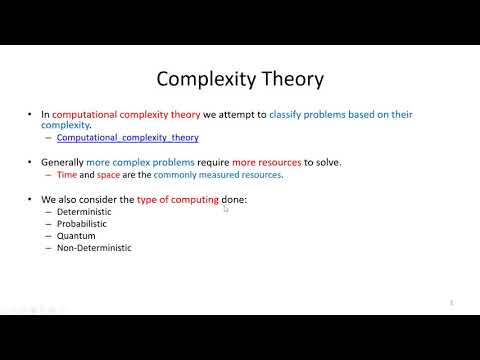

🤔 Computational Complexity Theory: The Study of Resource Bounds

Computational complexity theory studies resource bounds: how much time and space any algorithm must use to solve a given problem. This field has led to significant advancements in our understanding of the limitations of computation and the development of efficient algorithms. The concept of NP-Completeness has been particularly important in this regard. No polynomial-time algorithm is known for any NP-Complete problem, and a polynomial-time algorithm for one of them would yield one for every problem in NP, so these problems are widely believed to be intractable. The study of Approximation Algorithms has been crucial for the optimization versions of such problems, where a provably near-optimal answer is accepted in place of an exact one. Moreover, the work of Juris Hartmanis and Richard Stearns has been instrumental in shaping our understanding of computational complexity theory. The relationship between computational complexity theory and Cryptography is also noteworthy, as the security of many cryptographic protocols relies on the hardness of certain computational problems.
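The asymmetry behind NP can be made concrete with Boolean satisfiability. The following is a minimal sketch, not a practical solver: the function names and the clause encoding (a list of integer literals, where `k` means variable `k` is true and `-k` means it is false) are assumptions chosen for illustration. Checking a proposed assignment takes time linear in the formula size, while the naive search tries up to 2^n assignments.

```python
from itertools import product


def satisfies(clauses, assignment):
    """Verify a candidate assignment in time linear in the formula size."""
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in clauses
    )


def brute_force_sat(clauses, num_vars):
    """Try every one of the 2**num_vars assignments; return one that works, or None."""
    for bits in product([False, True], repeat=num_vars):
        assignment = {i + 1: bits[i] for i in range(num_vars)}
        if satisfies(clauses, assignment):
            return assignment
    return None


# (x1 or x2) and (not x1 or x3) and (not x2 or not x3)
formula = [[1, 2], [-1, 3], [-2, -3]]
solution = brute_force_sat(formula, 3)
print(solution, satisfies(formula, solution))
```

The verifier is the cheap part; whether the search can always be made comparably cheap is the P versus NP question.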

📈 Time and Space Complexity: Understanding the Trade-offs

Time and space complexity are fundamental concepts in the study of algorithms. The time complexity of an algorithm describes how the number of elementary steps it performs grows with the size of its input, while the space complexity describes how the memory it requires grows. Understanding the trade-offs between these two resources is crucial for developing efficient algorithms, since extra memory can often be spent to save time and vice versa. The study of Algorithmic Techniques, such as Divide and Conquer and Dynamic Programming, has enabled the creation of algorithms that can solve complex problems efficiently. Furthermore, the analysis of algorithms using Big O Notation has provided a framework for understanding the performance of algorithms. However, the limitations of Big O Notation have also been recognized, and complementary approaches, such as Amortized Analysis, which bounds the average cost per operation over a whole sequence of operations, have been developed. The work of Robert Tarjan has been influential in the development of algorithmic techniques.
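One common form of the time and space trade-off is caching results that would otherwise be recomputed. The sketch below is illustrative only: the function names and the one-element list used as a call counter are assumptions. It computes Fibonacci numbers twice, once with no extra memory beyond the call stack and once with a cache that grows linearly with `n`.

```python
def fib_naive(n, counter):
    """Plain recursion: no cache, exponentially many calls."""
    counter[0] += 1
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def fib_memo(n, counter, cache):
    """Same recurrence, but each value is stored once it has been computed."""
    counter[0] += 1
    if n in cache:
        return cache[n]
    if n < 2:
        result = n
    else:
        result = fib_memo(n - 1, counter, cache) + fib_memo(n - 2, counter, cache)
    cache[n] = result
    return result


naive_calls, memo_calls = [0], [0]
naive_value = fib_naive(20, naive_calls)
memo_value = fib_memo(20, memo_calls, {})
print(naive_value, memo_value, naive_calls[0], memo_calls[0])
```

Both versions return the same value; the memoized one makes far fewer calls at the cost of storing one entry per distinct argument.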

👊 Theoretical Models: Turing Machines and Beyond

Theoretical models, such as Turing Machines, have played a crucial role in the development of computational complexity theory. These models give a precise, machine-independent definition of a computation step and a memory cell, which is what makes statements about time and space bounds meaningful. The study of Automata Theory has also been important in this regard, as it has enabled the creation of algorithms that can recognize and generate complex patterns. Moreover, the work of Noam Chomsky has been instrumental in shaping our understanding of formal language theory and its relationship to computational complexity. The relationship between theoretical models and Programming Languages is also noteworthy, as the design of programming languages and their parsers draws on formal language theory and automata.
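To make the model concrete, here is a minimal sketch of a single-tape Turing machine simulator. The transition-table format, the state names `start` and `halt`, and the example machine (which inverts every bit of its input) are assumptions made for illustration, not a standard encoding.

```python
def run_turing_machine(transitions, tape, state="start", blank="_", max_steps=10_000):
    """Run a single-tape machine until it reaches the 'halt' state.

    transitions maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is "L" or "R".
    """
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        write, move, state = transitions[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    return "".join(cells[i] for i in sorted(cells)).strip(blank)


# Example machine: sweep right, flipping each bit, and halt at the first blank.
invert_bits = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", "_"): ("_", "R", "halt"),
}

print(run_turing_machine(invert_bits, "1011"))  # prints 0100
```

The number of loop iterations is the machine's running time and the number of tape cells touched is its space usage, which is how the model ties into time and space complexity.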

📊 Algorithmic Techniques: Divide and Conquer, Dynamic Programming, and Greedy Algorithms

Algorithmic techniques, such as Divide and Conquer, Dynamic Programming, and Greedy Algorithms, have been widely used in the development of efficient algorithms. Divide and conquer splits a problem into independent sub-problems and combines their solutions, dynamic programming stores the solutions of overlapping sub-problems so that each is computed only once, and greedy algorithms build a solution one locally best choice at a time. Related tools such as Linear Programming address optimization problems directly. The study of Data Structures has also been crucial in this regard, as it has enabled the creation of algorithms that can efficiently manipulate and query complex data. Furthermore, the work of Jon Bentley has been influential in the development of algorithmic techniques. The relationship between algorithmic techniques and Software Engineering is also noteworthy, as the design of software systems relies on a deep understanding of algorithmic techniques.
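A small coin-change example shows how greedy and dynamic programming approaches can differ on the same problem. This is a sketch under assumed names and an assumed coin system (`[1, 3, 4]`), chosen because the greedy choice is not optimal for it; for many real currency systems the greedy answer happens to be optimal.

```python
def greedy_coin_count(coins, amount):
    """Always take as many of the largest remaining coin as possible."""
    count = 0
    for coin in sorted(coins, reverse=True):
        used, amount = divmod(amount, coin)
        count += used
    return count if amount == 0 else None


def dp_coin_count(coins, amount):
    """Dynamic programming: best[t] is the fewest coins that sum to t."""
    unreachable = float("inf")
    best = [0] + [unreachable] * amount
    for total in range(1, amount + 1):
        for coin in coins:
            if coin <= total and best[total - coin] + 1 < best[total]:
                best[total] = best[total - coin] + 1
    return best[amount] if best[amount] != unreachable else None


coins = [1, 3, 4]
print(greedy_coin_count(coins, 6))  # prints 3 (4 + 1 + 1)
print(dp_coin_count(coins, 6))      # prints 2 (3 + 3)
```

The greedy version runs in time proportional to the number of coin types, while the dynamic programming version takes time proportional to the amount times the number of coin types but is guaranteed to find the minimum.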

🔍 Analysis of Algorithms: Big O Notation and Its Limitations

The analysis of algorithms is a critical component of computer science, as it enables the development of efficient solutions to complex problems. Big O Notation has provided a framework for understanding the performance of algorithms, but it is most often applied to the worst case, which can be far more pessimistic than behavior on typical inputs. Alternative approaches, such as Smoothed Analysis, which measures expected performance when worst-case inputs are slightly perturbed at random, have been developed to provide a more nuanced understanding of algorithmic performance. Moreover, the study of Randomized Algorithms has enabled the creation of algorithms that can solve complex problems efficiently in expectation. The work of William Pugh, whose skip list is a well-known randomized data structure, has been instrumental in this area. The relationship between algorithm analysis and Machine Learning is also noteworthy, as the design of machine learning algorithms relies on a deep understanding of algorithm analysis.
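Randomized quickselect is a compact example of an algorithm whose worst-case bound understates its practical behavior. The sketch below, with an assumed function name and an optional `rng` parameter added only to make runs reproducible, finds the k-th smallest element. Its expected running time is linear in the input size, although an unlucky sequence of pivots gives quadratic time.

```python
import random


def quickselect(items, k, rng=random):
    """Return the k-th smallest element of items (k is 0-indexed)."""
    if not 0 <= k < len(items):
        raise IndexError("k is out of range")
    pool = list(items)
    while True:
        pivot = rng.choice(pool)  # random pivot: no fixed input is always bad
        lower = [x for x in pool if x < pivot]
        equal = [x for x in pool if x == pivot]
        if k < len(lower):
            pool = lower
        elif k < len(lower) + len(equal):
            return pivot
        else:
            k -= len(lower) + len(equal)
            pool = [x for x in pool if x > pivot]


data = [9, 1, 8, 2, 7, 3, 6, 4, 5]
print(quickselect(data, 4))  # median of 1..9, prints 5
```

Because the pivot is chosen at random, the expected-time guarantee holds for every input rather than only for inputs drawn from some assumed distribution.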

🌐 Real-World Applications: Cryptography, Compilers, and Database Systems

The real-world applications of algorithms are diverse and widespread. Cryptography, for example, relies on the hardness of certain computational problems to ensure secure communication. Compilers, on the other hand, use algorithms to optimize the performance of software systems. Database systems also rely on algorithms to efficiently query and manipulate complex data. The study of Data Mining has also been crucial in this regard, as it has enabled the creation of algorithms that can extract insights from large datasets. Furthermore, the work of Jeffrey Ullman has been influential in the development of algorithms for database systems. The relationship between algorithms and Artificial Intelligence is also noteworthy, as the design of AI systems relies on a deep understanding of algorithms.

🤝 The Interplay between Mathematics and Computational Complexity

The interplay between mathematics and computational complexity is a rich and complex one. The study of Mathematical Logic has provided a framework for understanding the foundations of computation, while the study of Category Theory has enabled the creation of algorithms that can compose and abstract complex systems. Moreover, the work of Stephen Wolfram has been instrumental in shaping our understanding of the relationship between mathematics and computation. The relationship between mathematics and Computer Vision is also noteworthy, as the design of computer vision algorithms relies on a deep understanding of mathematical techniques.

🚀 Future Directions: Quantum Computing and the Impact on Algorithm Design

The future of algorithm design is likely to be shaped by the emergence of Quantum Computing. Quantum computers have the potential to solve certain problems much faster than classical computers, and the study of Quantum Algorithms is an active area of research. The work of Peter Shor has been influential in the development of quantum algorithms: Shor's algorithm factors integers in polynomial time on a quantum computer. The relationship between quantum computing and Cryptography is therefore noteworthy, as the security of many cryptographic protocols relies on the hardness of certain computational problems, and public-key schemes such as RSA depend specifically on the difficulty of factoring. Furthermore, the study of Machine Learning is relevant here, as it has enabled the creation of algorithms that can learn from complex data.
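The classical part of Shor's factoring approach can be sketched without any quantum hardware. The illustration below reduces factoring to finding the multiplicative order of a number modulo `n`. Here the order is found by brute force, which takes exponential time in the bit length of `n`; that step is the one a quantum computer is expected to speed up. The function names and the example values (`n = 15`, `a = 7`) are assumptions for the sketch.

```python
from math import gcd


def multiplicative_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force."""
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a, n):
    """Try to split n using the order of a; return None if this a does not work."""
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # an odd order gives no square root to work with
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: try another a
    p = gcd(half - 1, n)
    return (p, n // p)


print(multiplicative_order(7, 15))  # prints 4
print(factor_from_order(7, 15))     # prints (3, 5)
```

When the function returns `None`, the usual remedy is to pick a different `a` and try again.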

📚 Conclusion: The Ongoing Quest for Efficient Algorithms

In conclusion, the study of algorithms is a vibrant and dynamic field that continues to shape our understanding of computation. The interplay between mathematics and computational complexity has been crucial in the development of efficient algorithms, and the emergence of new technologies, such as quantum computing, is likely to have a significant impact on the field. As we look to the future, it is clear that the ongoing quest for efficient algorithms will continue to drive innovation and advancement in computer science. The work of Alan Turing has been instrumental in shaping our understanding of computation, and his legacy continues to inspire new generations of computer scientists.

Key Facts

- Year: 2022

- Origin: Stanford University, California, USA

- Category: Computer Science

- Type: Concept

- Format: comparison

Frequently Asked Questions

What is the difference between the mathematics of algorithms and computational complexity theory?

The mathematics of algorithms focuses on the design and analysis of algorithms using mathematical techniques, while computational complexity theory examines the resources required to solve computational problems. The two approaches are complementary, and a deep understanding of both is necessary for developing efficient algorithms. The work of Donald Knuth has been instrumental in shaping our understanding of algorithm design and analysis, while the work of Stephen Cook has been influential in the development of computational complexity theory.

What is the significance of NP-Completeness in computational complexity theory?

NP-Completeness is a fundamental concept in computational complexity theory, as it provides a framework for understanding the limitations of computation. A problem is NP-Complete if it belongs to NP, meaning a proposed solution can be verified in polynomial time, and it is at least as hard as every other problem in NP. The study of NP-Completeness has enabled the identification of problems that are likely to be intractable: a polynomial-time algorithm for any one NP-Complete problem would give one for all of NP. The concept traces to the work of Stephen Cook and Richard Karp, building on the foundations of computational complexity theory laid by Juris Hartmanis and Richard Stearns.

What is the role of Big O Notation in algorithm analysis?

Big O Notation provides a framework for understanding the performance of algorithms, but its limitations have also been recognized. Big O Notation gives an asymptotic upper bound on the time or space complexity of an algorithm; it does not provide a lower bound, and it hides constant factors that can matter in practice. Complementary approaches, such as Amortized Analysis, which bounds the average cost per operation over a sequence of operations rather than the cost of the single most expensive one, have been developed to provide a more nuanced understanding of algorithmic performance. The work of Robert Tarjan has been influential in the development of algorithmic techniques.
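A growable array that doubles its capacity when full is a standard illustration of amortized analysis. The sketch below uses an assumed function name and does not allocate a real buffer. It only counts how many element copies the resizes cause over a run of appends. A single append can cost time proportional to the current size, yet the total number of copies stays below twice the number of appends, so the amortized cost per append is constant.

```python
def count_copies(num_appends):
    """Count element copies caused by capacity doubling over num_appends appends."""
    capacity, size, copies = 1, 0, 0
    for _ in range(num_appends):
        if size == capacity:
            copies += size  # every existing element moves to the new buffer
            capacity *= 2
        size += 1
    return copies


for n in (10, 1_000, 100_000):
    print(n, count_copies(n), count_copies(n) / n)
```

The ratio printed in the last column stays below 2 however large `n` becomes, which is the amortized bound a worst-case analysis of a single append would miss.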

What is the relationship between algorithms and artificial intelligence?

The design of artificial intelligence systems relies on a deep understanding of algorithms. Algorithms are used to optimize the performance of AI systems, and the study of Machine Learning has enabled the creation of algorithms that can learn from complex data. The relationship between algorithms and AI is a rich and complex one, and the ongoing quest for efficient algorithms will continue to drive innovation and advancement in AI. The work of Jeffrey Ullman has been influential in the development of algorithms for database systems, while the work of Stephen Wolfram has been instrumental in shaping our understanding of the relationship between mathematics and computation.

What is the impact of quantum computing on algorithm design?

The emergence of quantum computing is likely to have a significant impact on algorithm design. Quantum computers have the potential to solve certain problems much faster than classical computers, and the study of Quantum Algorithms is an active area of research. The work of Peter Shor has been influential in the development of quantum algorithms, and the relationship between quantum computing and Cryptography is also noteworthy, as the security of many cryptographic protocols relies on the hardness of certain computational problems.

What is the significance of the work of Alan Turing in the development of computer science?

The work of Alan Turing has been instrumental in shaping our understanding of computation. Turing's work on the theoretical foundations of computation, including the development of the Turing Machine, has had a lasting impact on the field of computer science. The relationship between Turing's work and the development of Artificial Intelligence is also noteworthy, as the design of AI systems relies on a deep understanding of computation. The work of Noam Chomsky has also been influential in shaping our understanding of formal language theory and its relationship to computational complexity.

What is the relationship between algorithms and data structures?

The design of algorithms relies on a deep understanding of Data Structures. Data structures provide a framework for efficiently manipulating and querying complex data, and the study of Algorithmic Techniques has enabled the creation of algorithms that can solve complex problems efficiently. The work of Jon Bentley has been influential in the development of algorithmic techniques, while the work of Jeffrey Ullman has been instrumental in shaping our understanding of algorithms for database systems.