Overview

The roots of computer science stretch back to antiquity, with early civilizations developing tools for calculation. The abacus, used in Mesopotamia, represents one of the earliest known calculating devices. Later, Heron of Alexandria described mechanical automata, hinting at programmable machines. The 17th century saw Blaise Pascal invent the Pascaline, a mechanical calculator, followed by Gottfried Wilhelm Leibniz's Stepped Reckoner. The true conceptual leap towards modern computing, however, began with Charles Babbage's Analytical Engine, a design for a general-purpose mechanical computer. Ada Lovelace, often considered the first computer programmer, recognized its potential beyond mere calculation.

⚙️ How It Works

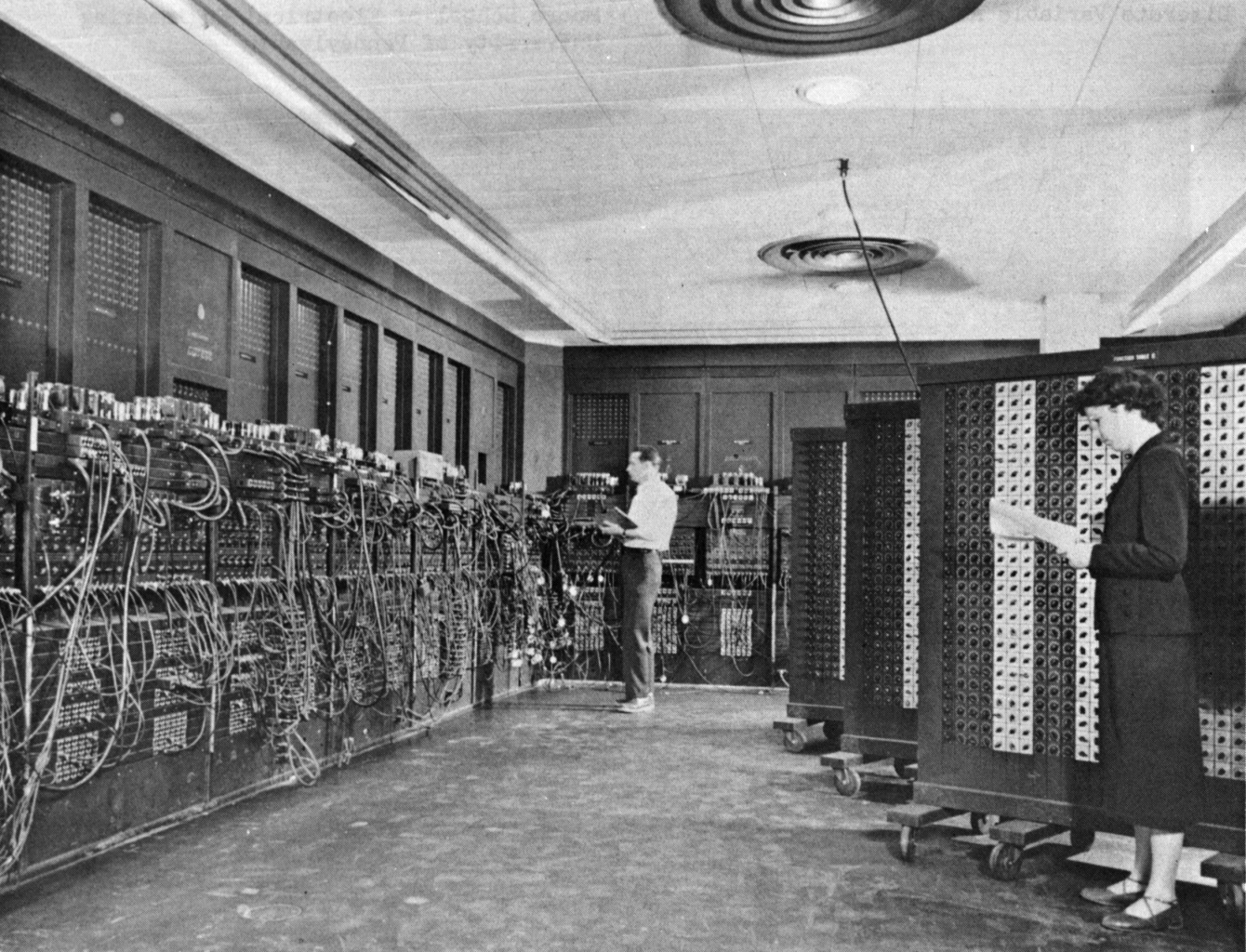

Modern computer science rests on algorithms and data structures, together with abstract models of computation that can be implemented on physical hardware. The theoretical underpinnings were solidified by Alan Turing's 1936 concept of the Turing machine, a mathematical model of computation that defines an abstract machine capable of simulating any computer algorithm. This theoretical framework, combined with advancements in electronics and digital circuitry, led to the development of early electronic digital computers such as ENIAC and UNIVAC I in the mid-20th century. The von Neumann architecture, proposed by John von Neumann, became a foundational design, outlining how computers could store both programs and data in the same memory.
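To make the Turing machine model concrete, the following is a minimal sketch of a single-tape simulator in Python. The function name `run_turing_machine`, the rule table `INCREMENT_RULES`, the blank symbol `_`, and the step limit are all illustrative assumptions rather than part of any standard definition. The example machine adds one to a binary number written on the tape.

```python
from collections import defaultdict

BLANK = "_"  # assumed blank symbol for this sketch


def run_turing_machine(transitions, tape, start_state, halt_states, max_steps=10_000):
    """Simulate a single-tape Turing machine.

    transitions maps (state, symbol) -> (new_state, symbol_to_write, move),
    where move is "L" (left) or "R" (right). The head starts on cell 0.
    Returns (final_state, tape_contents_without_surrounding_blanks).
    """
    # The tape is unbounded in both directions; unread cells hold BLANK.
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    state, head, steps = start_state, 0, 0

    while state not in halt_states:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        rule = transitions.get((state, cells[head]))
        if rule is None:
            break  # no applicable rule: the machine stops
        state, cells[head], move = rule
        head += 1 if move == "R" else -1
        steps += 1

    if not cells:
        return state, ""
    lo, hi = min(cells), max(cells)
    contents = "".join(cells[i] for i in range(lo, hi + 1)).strip(BLANK)
    return state, contents


# Hypothetical rule table: increment a binary number by one.
# "right" scans to the end of the input; "carry" propagates the carry leftward.
INCREMENT_RULES = {
    ("right", "0"): ("right", "0", "R"),
    ("right", "1"): ("right", "1", "R"),
    ("right", BLANK): ("carry", BLANK, "L"),
    ("carry", "1"): ("carry", "0", "L"),
    ("carry", "0"): ("done", "1", "L"),
    ("carry", BLANK): ("done", "1", "L"),
}

if __name__ == "__main__":
    print(run_turing_machine(INCREMENT_RULES, "1011", "right", {"done"}))  # ('done', '1100')
```

The whole machine is described by a finite table of rules, while the tape provides unbounded storage. The step limit is a practical safeguard for this sketch only, since a Turing machine is not guaranteed to halt.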

📊 Key Facts & Numbers

Computing capability has grown exponentially. ENIAC, the first electronic general-purpose computer, was built by J. Presper Eckert and John Mauchly and completed in 1945. In 1971, Intel released the Intel 4004, the first commercially available microprocessor, packing roughly 2,300 transistors onto a single chip. Today, a modern smartphone, costing under $1,000, possesses processing power millions of times greater than ENIAC. The global software market was valued at over $600 billion in 2023, and the number of internet users surpassed 5.3 billion by early 2024, demonstrating the pervasive reach of computational technologies.

👥 Key People & Organizations

Charles Babbage and Ada Lovelace laid the conceptual groundwork in the 19th century. The 20th century saw theoretical giants such as Alan Turing, John von Neumann, and Kurt Gödel formalize the principles of computation and logic. Pioneers in hardware include J. Presper Eckert and John Mauchly, who built ENIAC, and Ted Hoff at Intel, who co-invented the microprocessor. In software, Grace Hopper developed one of the first compilers, and Ken Thompson and Dennis Ritchie created the UNIX operating system at Bell Labs, where Ritchie also designed the C programming language. Major organizations like IBM, Microsoft, and Google have been instrumental in developing and commercializing computer science advancements.

🌍 Cultural Impact & Influence

Computer science has profoundly reshaped nearly every facet of human existence. The advent of the internet and the World Wide Web in the late 20th century revolutionized communication, commerce, and information access, creating globalized markets and new forms of social interaction. Fields like artificial intelligence, machine learning, and data science are driving innovation in medicine, finance, and entertainment. The digital transformation has also influenced art, music, and literature, giving rise to new creative mediums and distribution channels, from digital art to streaming media.

⚡ Current State & Latest Developments

The field continues to evolve rapidly. Current work focuses heavily on artificial intelligence, particularly large language models like GPT-4 and Gemini, and on integrating them into everyday applications. Advancements in quantum computing promise to solve certain problems that are currently intractable for classical computers. The expansion of edge computing allows data to be processed closer to where it is generated, reducing latency for applications like autonomous vehicles and IoT devices. Cybersecurity remains a critical and evolving area, with constant innovation needed to counter increasingly sophisticated threats.

🤔 Controversies & Debates

Debates persist regarding the ethical implications of AI, including bias in algorithms, job displacement, and the potential for misuse. The concentration of power within a few major tech corporations like Google, Meta, and Amazon raises concerns about market monopolization and data privacy. The digital divide, the gap between those with and without access to computing technology and the internet, remains a persistent global challenge. Furthermore, the environmental impact of massive data centers and the energy consumption of cryptocurrency mining are subjects of ongoing scrutiny.

🔮 Future Outlook & Predictions

The future of computer science points towards increasingly intelligent and interconnected systems. Expect further breakthroughs in AI, potentially leading to artificial general intelligence (AGI). Quantum computing is poised to revolutionize fields like drug discovery and materials science. The Internet of Things will continue to expand, embedding computational capabilities into more objects and environments. Advances in human-computer interaction, including virtual reality and augmented reality, will likely blur the lines between the physical and digital worlds, creating new paradigms for work, education, and entertainment.

💡 Practical Applications

Computer science principles are now embedded in countless practical applications. They power the internet and the World Wide Web, enabling global communication and commerce. Software is ubiquitous, from operating systems on personal computers and smartphones to complex enterprise resource planning (ERP) systems. Data science and analytics are used to derive insights from vast datasets in fields ranging from marketing to scientific research. Computer graphics are essential for film, gaming, and design, while cryptography underpins secure online transactions and data protection.

Key Facts

- Category: history
- Type: concept